GenAI is already widespread and is being pushed into every industry. There is hardly an area of the software industry where you won't bump into it.

According to Gartner, the overall artificial intelligence market will grow to $190 billion by 2025. GenAI is the new player in town, presumably disrupting the way businesses create data-driven products and courses.

The use of GenAI in software test automation is stirring a debate in the testing community. As you know from past endeavors, the idea behind GenAI in test automation is to speed up the testing process and reduce the workload on human testers. But this time it is different.

Because GenAI can generate text with GPT-4 and other machine learning models, it can go one of two ways: it brings you efficiency, or it accelerates sh*t.

A short introduction to GenAI first: What is it?

Generative AI is all about models that create new content based on what they have learned from existing data or patterns. Think of it as creating new texts, pictures, tunes, voices, and other kinds of media or data. What is special about generative AI is its presumed ability to be creative and come up with fresh ideas, going beyond analyzing or rehashing what is already out there.

We love GenAI if used wisely. For us, the A in AI stands for Augmented, not Artificial, and that makes a tremendous difference. Our approach is to use GenAI to support your daily tasks, not to do the daily tasks for you.

But how exactly does it work in test automation?

In mainstream marketing, you might read the following: GenAI in test automation uses AI technologies to generate test cases and test data, based on learning from existing test cases, user stories, or directly from application interfaces.

These generated test cases are expected to cover a wide range of test scenarios, including edge cases and negative test scenarios.

The hypothesis is that these test cases could be automatically executed by GenAI tools, which would integrate into existing test automation frameworks and dynamically adapt to changes in the application.

These approaches promise to reduce manual effort in test automation, speed up the testing process, and improve software quality through more comprehensive test coverage.

In other words, it takes over your job.

That is not how we at TestResults.io use or see GenAI today (Q* might change everything). Today, we use GenAI to let you prompt your test cases, which is a tremendous time saver. We combine it with traditional computer vision, machine learning, and LLMs to generate test models and test cases step by step in an augmented fashion: you are in the driver's seat, but GenAI does most of the work.

And in practical use?

We asked testing experts to what extent, in their opinion, GenAI can make testing and test automation more efficient.

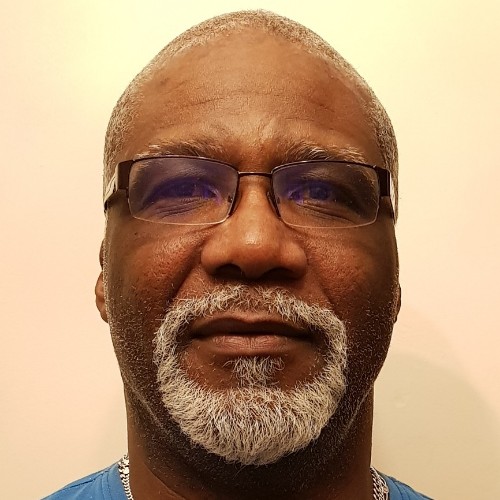

Paul M. Grossman

Automation Testing Expert with Jira, GitLab, and Allure Experience

Generative AI enhances test automation efficiency in various ways: code analysis, test creation, and even load testing.

The biggest advantage AI can provide is quickly creating concise, modular functions that shrink a framework's code base. Generative AI expedites development by generating code from descriptions of the functionality, input parameters, and expected output. It often offers guidance on coding style, best practices, and naming conventions.

On a more advanced level, it can analyze requirements and propose testing scenarios, edge cases, and potential gaps. However, a challenge arises when a substantial number of test cases are generated within a short time frame, which may not be feasible to execute in a reasonable amount of time. This challenge can be mitigated by parallel testing in multiple cloud environments, albeit at an additional cost. Ultimately, time is money.
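The trade-off between wall-clock time and cost can be made concrete with some back-of-the-envelope arithmetic. The sketch below is a hypothetical estimator, not part of any tool mentioned here: it assumes every test takes roughly the same time and that tests split evenly across parallel workers, and it ignores setup, teardown, and uneven durations. The point it shows is that parallelism shortens the wait but leaves the total compute you pay for unchanged.

```python
import math


def estimated_wall_clock_minutes(num_tests: int, avg_minutes_per_test: float, workers: int) -> float:
    """Rough wall-clock estimate when tests are split evenly across parallel workers.

    Assumption: all tests take about the same time and no worker ever idles.
    """
    if workers < 1:
        raise ValueError("need at least one worker")
    # Each worker runs at most ceil(num_tests / workers) tests back to back.
    batches = math.ceil(num_tests / workers)
    return batches * avg_minutes_per_test


def estimated_machine_minutes(num_tests: int, avg_minutes_per_test: float) -> float:
    """Total compute time consumed, which parallelism does not reduce."""
    return num_tests * avg_minutes_per_test


if __name__ == "__main__":
    # Hypothetical numbers: 1,200 generated tests at about 2 minutes each.
    print(estimated_wall_clock_minutes(1200, 2.0, 1))   # 2400.0 (40 hours, serial)
    print(estimated_wall_clock_minutes(1200, 2.0, 20))  # 120.0 (2 hours on 20 workers)
    print(estimated_machine_minutes(1200, 2.0))         # 2400.0 machine minutes either way
```

Twenty workers bring the wait down from 40 hours to 2, but the cloud bill still covers 2,400 machine minutes, which is exactly why time is money.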

Generative AI can also produce diverse sets of test data, ensuring comprehensive test coverage and uncovering potential issues across a wide range of scenarios. However, this can quickly become a time-consuming endeavor, particularly if we try to test every data combination.
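A short sketch shows why "every data combination" quickly becomes unmanageable. The form fields and values below are hypothetical and chosen only for illustration. The exhaustive set of test inputs is the Cartesian product of all field values, so every field you add multiplies the total.

```python
from itertools import product
from math import prod

# Hypothetical input fields for a sign-up form; the values are illustrative only.
fields = {
    "country": ["CH", "DE", "US", "JP"],
    "age": [0, 17, 18, 65, 130],
    "email": ["valid@example.com", "", "no-at-sign", "a" * 255 + "@x.io"],
    "plan": ["free", "pro", "enterprise"],
    "newsletter": [True, False],
}


def exhaustive_count(fields: dict) -> int:
    """Number of test cases needed to cover every combination of values."""
    return prod(len(values) for values in fields.values())


def exhaustive_cases(fields: dict):
    """Yield each combination as a dict mapping field name to value."""
    names = list(fields)
    for combo in product(*fields.values()):
        yield dict(zip(names, combo))


print(exhaustive_count(fields))  # 4 * 5 * 4 * 3 * 2 = 480
```

Five small fields already produce 480 cases. Adding one more field with ten values would push that to 4,800, which is why teams usually sample or prioritize combinations instead of generating all of them.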

GenAI has the potential to extend testing with branch or path testing. These tests validate each possible path or branch through the interface to ensure that it functions correctly under various scenarios and conditions. But it can also become a combinatorial nightmare.
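The growth behind path testing can be illustrated with a simplified model. Assume a flow contains `n` independent, sequential if/else decisions; this assumption is ours, and real control flow with loops or correlated conditions behaves differently. Each decision doubles the number of distinct paths, so the count is 2^n and grows exponentially.

```python
from itertools import product


def enumerate_paths(num_branches: int):
    """Yield every path through `num_branches` independent if/else decisions.

    Each path is a tuple of booleans: True means the "if" branch was taken,
    False means the "else" branch was taken.
    """
    return product((True, False), repeat=num_branches)


def path_count(num_branches: int) -> int:
    """Number of distinct paths under the independent-branch assumption."""
    return 2 ** num_branches


print(list(enumerate_paths(2)))  # [(True, True), (True, False), (False, True), (False, False)]
for n in (10, 20, 30):
    print(n, path_count(n))      # 1024, 1048576, 1073741824
```

Thirty independent decisions already produce more than a billion paths, far more than any test suite can execute.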

Many fear that Skynet will take over the world. This is not a major concern for me, as I have yet to get an AI to follow the rules well enough to solve a simple Wordle. If the T-800s do arrive, they are likely to enslave humanity, forcing us to test the operating systems manually.

I am more concerned about the loss of corporate IP from developers trying to optimize their code by pasting their work into online AI sites.

One thing AI will never accurately replace is user acceptance testing. It will never be able to tell a good user interface design from a bad one. It will never be able to discern what humans regard as critical data. There will be no monkeys typing randomly at a keyboard and eventually producing the complete works of Shakespeare. The greatest downside will be AI generating endless amounts of poorly designed front-end interfaces, and us humans not being patient enough to wait for it to produce a truly good one, assuming we could even recognize it.

Larry Goddard

Creator of klassi-js | Test Automation Architect | QA Advocate | Public Speaker | Mentor | Coach

"Does GenAI make testing and test automation more efficient?"

Short answer: Yes.

Generative AI (GenAI) has the potential to significantly increase the effectiveness of test automation in specific scenarios. It provides automated solutions for generating test cases, data, and scripts, but its efficacy depends on the specific needs of the application under test and on the maturity of GenAI technologies.

GenAI's capacity to scrutinize an application's behavior and prerequisites results in the automatic generation of a substantial number of test cases, thereby enhancing test coverage. Furthermore, it contributes to the upkeep of test scripts, leading to time savings and a reduction in manual effort. For applications that rely on a diverse spectrum of input data, GenAI offers the valuable capability of constructing synthetic datasets that encompass a wide array of scenarios.

In situations where an application's user interface or structural components change frequently, GenAI can autonomously adapt the test scripts, effectively mitigating the challenges of script maintenance. It can also streamline the configuration of test environments by automating essential setup tasks such as resource provisioning and configuration generation. Additionally, GenAI has the potential to engage independently in exploratory testing, traversing the application to uncover unforeseen issues.

GenAI presents an enticing prospect for augmenting the efficiency of test automation, particularly in contexts requiring comprehensive automation, adaptability to frequent changes, or the generation of test data. Nonetheless, it is essential to evaluate its adoption thoughtfully, considering the specific demands of the project and the capabilities offered by the available GenAI tools.

Lalitkumar Bhamare

Award-winning Testing Leader | Manager Accenture Song | Lead - Thought Leadership QES Accenture DACH | International Keynote Speaker | Director at Association for Software Testing | CEO Tea-time with Testers

I would first like to talk about what makes testing and automation in testing efficient, and then come to the GenAI part of it.

Many things matter when we consider whether testing and automation are efficient. For me, testing is efficient when a tester uncovers information about risk, quality status, and the product overall in such a way that stakeholders can make an informed decision about what to do next. For stakeholders to be able to do that, this information needs to be provided quickly and regularly, and it needs to be reliable, i.e., it should stand up to scrutiny.

Whenever expert human judgment and assessment are involved, the chances of that information being reliable are higher. The same is true for automation in testing. For automation in testing to be efficient, I expect it to provide me with fast, regular, and reliable feedback.

Now, let's come to the GenAI part.

Does GenAI make testing and test automation more efficient?

I would say it cannot be generalized as simplistically as the question makes it sound. GenAI can assist testers in several ways, provided they know how to prompt it effectively and how not to get manipulated/misguided by the output.

For example, the other day I was designing tests for a medical device, and brainstorming with ChatGPT made me aware of many possibilities I could consider. These possibilities concerned different scenario-, domain-, and technology-specific tests I could benefit from. Were those tests reliable as they stood?

No, I had to apply my human judgment to pick some of those that were suitable for my context.

On the automation side, I have seen examples of self-healing automation, predictive test prioritization, and the use of AI to make element identification easier, less flaky, and so on. In some cases, generating test data on the fly with the help of AI can be helpful. In my experience, most of the problems that make automation less efficient relate to release management, testability, test data management, and infrastructure, and less to the actual scripting. I have yet to explore how GenAI could help there, so I cannot comment on it at the moment.
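To give a feel for what "predictive test prioritization" means in its simplest form, here is a hypothetical heuristic sketch. It is not an AI model and not any vendor's method. It ranks tests by historical failure rate and adds a bonus when a test touches recently changed code. The test records, the changed-code signal, and the 0.5 weight are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TestHistory:
    name: str
    runs: int
    failures: int
    touches_changed_code: bool  # hypothetical signal, e.g. derived from a coverage map


def risk_score(t: TestHistory) -> float:
    # Tests that have never run are treated as maximally risky.
    failure_rate = t.failures / t.runs if t.runs else 1.0
    # Arbitrary illustrative weight for tests covering changed code.
    return failure_rate + (0.5 if t.touches_changed_code else 0.0)


def prioritize(tests: List[TestHistory]) -> List[str]:
    """Return test names ordered from highest to lowest estimated risk."""
    return [t.name for t in sorted(tests, key=risk_score, reverse=True)]


history = [
    TestHistory("checkout", runs=50, failures=10, touches_changed_code=False),  # 0.20
    TestHistory("login", runs=100, failures=1, touches_changed_code=True),      # 0.51
    TestHistory("search", runs=40, failures=0, touches_changed_code=False),     # 0.00
    TestHistory("new_export", runs=0, failures=0, touches_changed_code=False),  # 1.00
]
print(prioritize(history))  # ['new_export', 'login', 'checkout', 'search']
```

A learned model would replace `risk_score` with a trained predictor. The surrounding idea stays the same: run the tests most likely to fail first so that feedback arrives sooner.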

All in all, I believe that, as long as we keep its lack of reliability in mind, GenAI can be a useful assistant and sparring partner that helps testers expedite certain aspects of their testing activities. Ultimately, however, the human tester decides where and how to use it to their advantage.

Jonas Schwammberger

Senior Software Engineer at TestResults.io | Artificial Intelligence & Machine Learning Specialist

Depending on how you use AI, you can achieve a result faster. But not necessarily the right one...

Let me explain.

Software is a mystery, like a black box. That's why we create test cases: to check whether this obscure thing does everything correctly.

GenAI has made leaps in correctly interpreting human commands and in understanding complex structures like software.

We might be tempted to treat GenAI like our testing division. We give GenAI our software and our test case and say: "Hey, perform this test case on my software and give me a report of the results."

If we let AI interpret and execute a test case, we get a quick result.

But that result is completely opaque, because AI, too, is a mysterious black box. Did the AI interpret the test case correctly, and did it execute it properly? We don't know!

And if we don't know, there's no point in testing.

Here's an example: Imagine giving an AI the autopilot of a plane to test: "Here's the software; please test it."

The AI reports all is fine. Would you board that plane?

No, neither would I.

Here's why: If I had a human tester test the autopilot and asked him to join me on the plane, he would be accountable. He would only board if he were confident that he had done everything correctly.

On the other hand, the AI doesn't board the plane with me; it's indifferent to the outcome, so to speak. It doesn't shoulder the responsibility with its life.

That's why I wouldn't board the plane if the AI had tested the autopilot.

You have no idea what the AI actually tested or how it tested it. You don't even know IF it tested or just generated a report for you.

You can't expect a comprehensible result when you let two black boxes interact, and traceability is essential in testing. In a regulated environment, for instance, we want to trace every step to understand why a test case failed during execution.
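What step-level traceability means in practice can be shown with a minimal, hypothetical sketch. It is not TestResults.io's implementation. Each step's description, outcome, failure reason, and UTC timestamp is recorded, so a reviewer can see exactly which step failed and why, rather than receiving only a pass/fail verdict.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass
class StepRecord:
    index: int
    description: str
    passed: bool
    detail: str
    timestamp: str


@dataclass
class TracedTestCase:
    name: str
    records: List[StepRecord] = field(default_factory=list)

    def run_step(self, description: str, check: Callable[[], bool]) -> bool:
        """Run one step and append its outcome to the trace."""
        try:
            passed = bool(check())
            detail = "ok" if passed else "check returned False"
        except Exception as exc:  # keep the failure reason instead of losing it
            passed = False
            detail = f"{type(exc).__name__}: {exc}"
        self.records.append(StepRecord(
            index=len(self.records) + 1,
            description=description,
            passed=passed,
            detail=detail,
            timestamp=datetime.now(timezone.utc).isoformat(),
        ))
        return passed

    def first_failure(self) -> Optional[StepRecord]:
        return next((r for r in self.records if not r.passed), None)


# Illustrative usage with stand-in checks instead of real UI interactions.
tc = TracedTestCase("login")
tc.run_step("open login page", lambda: True)
tc.run_step("submit valid credentials", lambda: 1 / 0)
failure = tc.first_failure()
print(failure.index, failure.description, failure.detail)
```

Here the trace shows that step 2 failed with a `ZeroDivisionError`. An opaque "AI says it passed" report cannot offer that kind of evidence.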

So giving AI decision-making power is not a good idea, because its reasoning is intangible.

Then there's the issue of technical dependency. If you use an AI from the internet, you have no control over changes to that software. Your test case might not work the same way two years from now.

But this doesn't mean GenAI can't be usefully integrated in test automation!

As soon as it produces more answers than questions, it makes perfect sense. For example, when generating test cases, a human can prompt their test case step by step, and GenAI helps them create it faster and with less effort.

A practical example is our generative journey creator, which you can use to prompt a complete user journey step-by-step.

So, my conclusion: Go for GenAI, as long as you retain the decision-making power on whether the automation reflects the test case.

After all, for now, AI will not replace humans in software testing.

Here is your space!

We are always looking for new perspectives and lively debates.

Do you have something to say on the topic of GenAI? The community wants to read it!

Send an email to andra.radu@progile.ch and give your statement a stage right here!
